Loading...

1. The Horizon

“It is tempting to call large language models, and ChatGPT in particular, hyperagents. This is not to say that such models are excessively agentive or so large and powerful as to be mysterious and unknowable. Nor is it just another way to fixate on their size. It is to stress that, whatever their actual capacities, they generate far more hype than other agents.

Perhaps only Jesus, Barbie, Obama, and T-Rex come close.”

The media has devoted article after article to large language models, such as OpenAI’s ChatGPT, and the incredibly realistic, often eerie, and sometimes horrific conversations they can generate. A study claims that GPT-4, the “generative pretrained transformer” underlying the latest version of ChatGPT, can already pass its freshman year at Harvard. Another predicts that 340 million full-time jobs will be lost to artificial intelligence—based on the ability of generative AI to create content that is indistinguishable from human work. One article informs us that recent advances in artificial intelligence have made noninvasive mind-reading possible. Another instructs us how to turn our chatbot into a life coach. We learn that tech leaders have signed a letter calling for a “pause” in AI research and the creation of even larger language models because they fear the long-term negative effects. Only a few weeks later, the AI boom is generating optimism in the tech sector as “stocks soar.”

And all that is already yesterday’s news.

If language has long been an emblem of the human species, talking machines seem to be harbingers of some kind of technological singularity. Indeed, if the brilliance—or at least eloquence—of large language models is any indication, we seem to be poised at the threshold of general AI, a form of artificial intelligence that will not only meet, if not surpass, human intelligence but also maybe even replace humans altogether.

Messiah for some, Armageddon for others.

It is not just the recent acceleration of the verbal powers of such agents that is staggering, it is also their scale. They can contain trillions of parameters, require months of training, and have the entire corpus of the written word at their disposal. It is therefore tempting to call large language models, and ChatGPT in particular, hyperagents. This is not to say that such models are excessively agentive or so large and powerful as to be mysterious and unknowable. Nor is it just another way to fixate on their size. It is to stress that, whatever their actual capacities, they generate far more hype than other agents.

Perhaps only Jesus, Barbie, Obama, and T-Rex come close.

What follows is a spirited attempt to cram large language models into a relatively small text. I focus on the semiotic processes—or meaningful practices—that mediate the emergent relations among three kinds of actors: human agents, like you and me; machinic agents, such as ChatGPT; and corporate agents, be they states, tech companies, or institutions more generally. I pay particular attention to the coupling, and hence co-mediation, of the guiding principles of such agents. I thereby offer a critical genealogy of the highly contested relations among human values, machinic parameters, and corporate powers. My goal is not so much to see past our current social and technological horizon as to offer a theory of the reasons for and effects of the horizon itself.

Chapter 2, “Human Semiosis,” offers an account of meaning that can span the distance between human interpretation and machinic calculation. Chapter 3, “Machine Semiosis,” is a gentle introduction to the inner workings of large language models. Chapter 4, “Pretraining and Fine-Tuning,” zooms in even closer, focusing on two key processes underlying the machinic mediation of meaning. Chapter 5, “Labor and Discipline,” argues that language models are the objects and agents of disciplinary regimes and constitute a novel—and problematic—mode of production. Chapter 6, “Parrot Power,” discusses the various kinds of generativity that underlie language models and shows their relation to linguistic competence, labor power, and corporate profits. Chapter 7, “Language Without Mind or World,” discusses the limitations of large language models and users’ limited awareness of those limits. Chapter 8, “Metasemiosis and Monsters,” analyzes the mediatization of human-machine interaction and shows its relation to a range of enclosures, horizons, and scales. Chapter 9, “On Interpretation,” takes up the distinction between humanistic and machinic interpretation and discusses strategies for interpreting texts that were generated by machines. Finally, chapter 10, “The Problem with Alignment,” discusses the promises and pitfalls of aligning machinic parameters with human values, as well as the necessity of dealigning them with corporate interests.

Insofar as large language models, and machinic intelligence more generally, are a central target of speculative capital, they constitute a fast-moving topic. So rather than fetishize bleeding-edge developments, which usually only adds to the hype, I focus on bread-and-butter issues—especially in the lead up to ChatGPT. I write for a broad audience, and so for people willing to learn a little bit of math and work their way through a limited amount of formalism for the sake of a deeper understanding. I argue, implicitly, that critical theorists need to have a detailed understanding of the media they are analyzing—or else they are the equivalent of cavemen critiquing calculators. And I relate machine learning, language models, and natural language processing to meaning, and the great (post)humanist interpretive tradition, with a particular focus on ideas coming out of anthropology, critical theory, and pragmatism.

Given the stakes, as well as the hype, I will begin cautiously and work my way toward the horizon slowly.

2. Human Semiosis

This chapter offers an account of meaning that can bridge the gap between humans and machines. It defines and exemplifies the key components of semiotic processes, shows the important role that values play in semiosis, and demonstrates how semiotic processes may embed and enchain. And, in preparation for the chapters that follow, it projects a simple functional notation onto human-specific semiotic processes so that they may easily be compared with those undertaken by large language models, and machinic agents more generally. In effect, I offer a noncanonical account of the grounds of interpretation, such that they may be extended past the limits of the human.

Components of Semiotic Processes

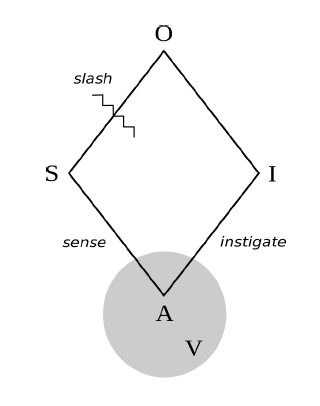

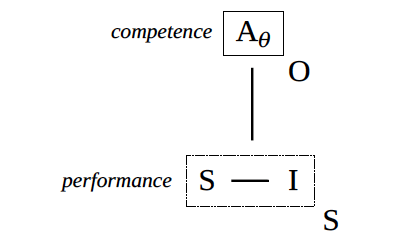

Figure 1. Semiotic processes

Figure 1 shows the key components of a semiotic process. Building on the ideas of Charles Sanders Peirce, the American logician and founding figure of pragmatism, a sign is whatever stands for something else. An object is whatever is stood for by a sign. An interpretant is whatever a sign creates insofar as it is taken to stand for an object. An agent is whatever can sense signs and instigate interpretants by way of relating to objects. Finally, values (which could also be called interpretative grounds or even guiding principles) are whatever an agent relies on to relate to signs, to relate signs and objects, and to relate objects and interpretants. Semiotic processes turn on motivation (what agents strive for) no less than meaning (what signs stand for).

Setting aside values for the moment, here are a few examples of semiotic processes. Someone (Agent) smells smoke (Sign), infers fire (Object), and calls for help (Interpretant). A telemarketer (A) hears the pitch of your voice (S), assumes you must be an adult male (O), and addresses you as “sir” (I). A student raises their hand (S), thereby indicating their desire to ask a question (O), and a teacher (A) calls on them (I). An interpreter (A) hears an utterance in French (S), which denotes a particular state of affairs and/or expresses a certain propositional content (O), which they then translate into German (I). Other examples include the exegesis of sacred texts, the diagnosis of illnesses, the undertaking of commands, the analysis of dreams, the explication of rituals, inferring a whole from a part, predicting subsequent events from preceding events, and far beyond.

In all of the foregoing examples, the interpretant makes sense in the context of the sign, given not just the interests (origins or identity) of the agent but also the features of the object (if only as imagined by the agent). As described in later chapters, the interpretant aligns with the sign insofar as it points toward the same object—however imprecisely.

While it is tempting to assume that objects are relatively objective and/or public (such as a tree that someone points to) and interpretants are relatively subjective and/or private (such as a thought or feeling), that is not necessarily the case. As these examples show, many objects are no more actual— and no less actual—than the desire one projects onto a person when they raise their hand; and many interpretants are as publicly available as signs, such as the German translation of the French sentence. And while many signs are communicative, insofar as they were intentionally expressed by an agent for the sake of securing an interpretant, the example of voice pitch highlights the fact that many sign-object relations—perhaps the majority—are nonintentional. The interpreting agent simply exploits (what seems to them to be) an existing correlation between a perceivable index and a putative identity.

“Each and every semiotic process contains its own horizon. ”

As these examples also show, a key feature of many semiotic processes is the fact that the agent only learns about the object through the sign: a cause is known by its effect; an intention is inferred through an action; a desire is intimated by a gesture; an identity is revealed through an index; an illness is disclosed through a symptom; and so forth. Loosely speaking, there is something like a slash that separates the sign from the object: what can be directly sensed by the agent is on one side; what can only be indirectly known (by means of the sign) is on the other side. Notice the little wavy line in figure 1. As will be seen in later sections, the key slash in machine semiosis is not that which separates speech acts from mental states or states of affairs (and hence separates language from mind or language from world, as stereotypically understood) but that which separates earlier parts of a text from later parts, and/or the past from the future.

In effect, each and every semiotic process contains its own horizon.

Values as Guiding Principles

Agents rely on a wide array of resources to engage in semiotic processes. To get from the sign to the object, they might rely on the rules and vocabulary of a particular language (e.g., French). They might rely on a certain understanding of causality (e.g., fire leads to smoke). They might rely on certain social conventions (e.g., a raised hand indicates a desire to ask a question). And they might rely on certain projected patterns (e.g., men usually have deeper voices than women). Moreover, to get from the object to the interpretant, they might rely on certain ethical commitments and economic rationales (e.g., one should be brave, houses are valuable). They might rely on certain social norms or strategies (e.g., strangers should be addressed politely, especially if one is hoping to make a sale). They might rely on the rights and responsibilities associated with particular social statuses (e.g., teachers are obliged to answer the questions of students, time permitting). And so forth.

More generally, semiotic agents rely on their knowledge of the grammars and lexicons of particular languages. They rely on felicity conditions (in the tradition of John Austin): shared understandings regarding the appropriate and effective use of language in context. They rely on their theories, intuitions, analytics, paradigms, imaginaries, hermeneutics, causal logics, epistemes, and worldviews. They rely on their taxonomies, partonomies, ontologies, schema, scripts, frames, stereotypes, prejudices, and biases. They rely on shared norms, rules, laws, conventions, protocols, and traditions. They rely on the affordances of various materials and/or the technological constraints of various media. They rely on their understandings of minds, signs, media, technology, language, nature, self, and society. They rely on morals, ethics, ideals, and evaluative standards. And, of course, they rely on context, cotext (meaning co-occurring text), and culture. Indeed, culture itself might be understood as relatively shared values, constituting something like the semiotic commons of a particular collectivity of agents.

Such interpretive resources, or values, are fundamental to semiotic processes. Understood as agent-specific sensibilities and assumptions, they function as guiding principles that allow agents to interrelate objects, signs, and interpretants. As such, they constitute the grounds of attention, affect, action, and inference. In particular, values help determine:

what an agent notices (such that it might constitute a sign in the first place);

what an agent infers or otherwise comes to know (given the sign so noticed);

how an agent acts, thinks, or feels (given the object so known).

Values may be encoded in texts; embodied in habits; enminded in beliefs and desires; embrained in neural networks; embedded in infrastructure, artifacts, and environments; and even engenomed in particular species. And many disciplines have long analyzed the genealogy of such values: the history of their creation, transformation, stabilization, and spread. In what follows, I will usually focus on values that are group-specific and historically changing and not be too concerned with their discipline-specific elaboration.

Although values often remain in the background of semiotic processes (which tend to be more noticeable figures, insofar as such processes involve relatively public actions and utterances), they can easily become figured. In particular, the objects of semiosis are often the values that guide semiotic processes: agents can topicalize, characterize, and reason about their values. In this way, agents can communicate and critique their own and others’ values.

Moreover, values are not only a condition of possibility for and the objects of semiotic processes, they are often the consequences as well as the ends of semiotic processes. Indeed, many interpretants are precisely changes in habits and beliefs, or values more generally, and hence changes in an agent’s propensity to interpretant future signs in particular ways.

In short, semiotic values are dynamic variables: at once the objects and interpretants, as well as the roots and fruits, of semiotic processes. As will be seen in the chapters that follow, they also play a decisive role in the machinic mediation of meaning.

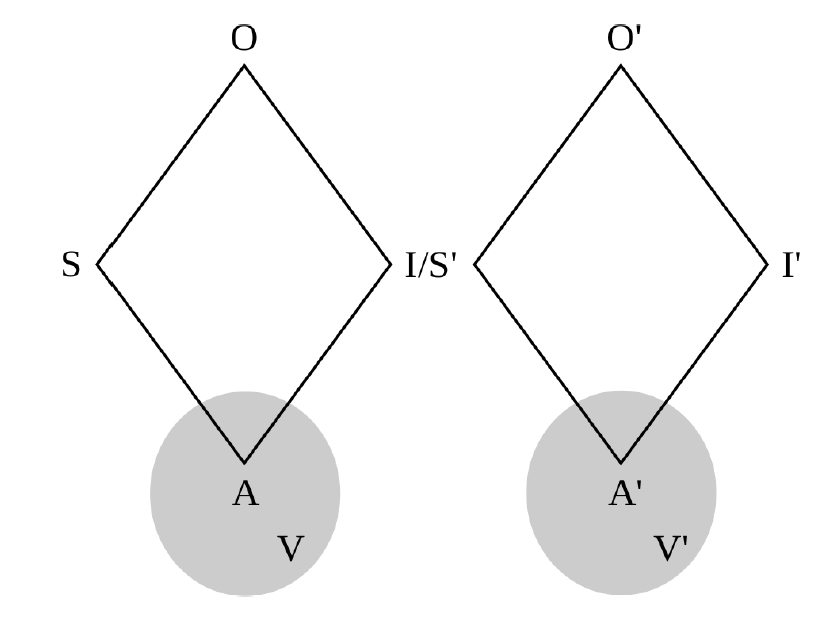

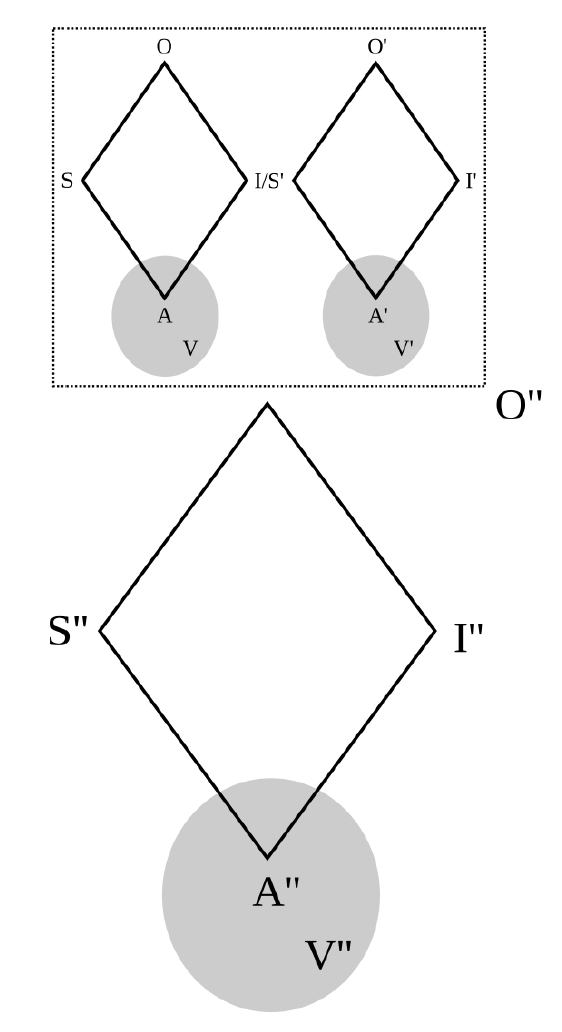

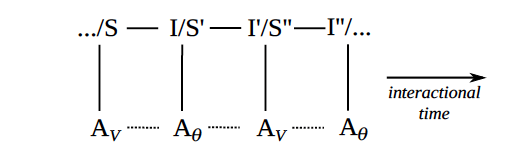

Figure 2. Enchaining

Apropos of the last set of points and looking forward to later arguments, I foreground two frequently occurring modes of semiotic mediation.

Figure 2 shows how semiotic processes may enchain: the interpretant in a prior process may constitute the sign in a subsequent process. For example, when a teacher calls on a student (as an interpretant of their having raised their hand), that itself also constitutes a sign (indicating that the student may now ask their question).

Such enchained semiotic processes constitute the backbone of everyday interaction and play a central role in mediating social relations. In particular, the relation between the sign and the interpretant (as two entities or events, with coupled relevance) mediates the relation between the signer and the interpreter (as two agents, with complementary and often emergent identities).

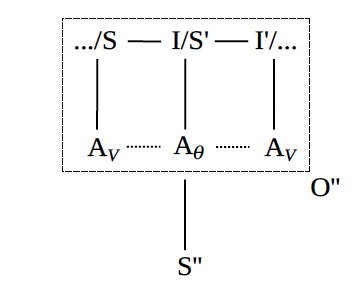

Figure 3. Embedding

Figure 3 shows how semiotic processes may embed: the object of a process may be constituted by any component of a process, any relation between such components, or any enchaining of semiotic processes more generally. To build on the previous example, another student in the class may later use reported speech, or even a stick figure cartoon with word balloons, to capture the interaction between the teacher and the student.

The values underlying semiotic processes are often the objects of semiotic processes: we can describe not just how the teacher responded to the student but also how they should have or could have responded. What we represent, and otherwise signify and interpret, is very often that which mediates our modes of signification and interpretation.

As a kind of shorthand going forth, it will sometimes prove useful to denote human semiotic processes in a quasi-functional notation:

I = AV(S)

Such a notation likens a human semiotic agent to a mathematical function, A. The input to this function is some sign, S; the output of this function is some interpretant, I; and the parameters of this function are the values of the agent, V. Compare a simple linear function like y = fθ(x) = mx+b, where x is the input, y is the output, and θ = {m,b} is a set of adjustable parameters that determine the slope and y-intercept of the line in question.

The point here is not to determine such a function, and certainly not to suggest that human semiotic agents are constituted by such a function (even if they may be modeled as one) but rather to condense all the dirty details of semiotic processes as they unfold in the wild, so to speak, in a compact notation for the sake of later comparison.

Humanists will, no doubt, be horrified. But I thought I might, in light of what comes next, meet the machines halfway.

3. Machine Semiosis

This chapter compares the key components of machine semiosis with those of human semiosis and shows how the parameters of machines are coupled to, and thereby made to align with, the values of people. By offering a gentle introduction to the mathematics underlying such models, I aim to dispel some of the mystery—and magical thinking—that otherwise surrounds them.

“The signs and interpretants of language models relate to something outside of themselves, and thereby possess intentionality, by virtue of being parasitic on a more originary mode of human intentionality.”

Large Language Models

At a certain level of abstraction, a large language model may be understood as a parameter-dependent function that accepts a sequence of words as its input and returns a sequence of words as its output. Assuming the function was well chosen and its parameters have been adequately set, the inputted sequence, known as the prompt, specifies a task that the user wants fulfilled, and the outputted sequence, known as the response, fulfills that task.

For example, if the prompt is “alphabetize the following words: bat, dog, cat, zebra, armadillo,” the response could be “armadillo, bat, cat, dog, zebra.” If the prompt is “translate the following sentence into English: me llavo las manos,” the response could be “I wash my hands.” If the prompt is “what is Napoleon most famous for?,” the response could be “conquering much of Europe.” And if the prompt is “write a short story, in the style of Chekhov, involving three clowns and a cabbage,” the response would be just such a story. Other actions a large language model may be asked to undertake include offering lifestyle tips, writing algorithms, brainstorming, extracting evidence from texts, and the like.

Recursively, and more generally, if the human user inputs a discursive move, the language model can output a felicitous response to that move, which can itself constitute a discursive move calling for its own response (recall the example of semiotic enchaining), such that a language model can engage in human-like conversations. This is what puts the Chat in ChatGPT.

More carefully, the prompt is typically a sequence of words that describes, and perhaps demonstrates, certain satisfaction conditions, and the response— at least when all goes well—is a sequence of words that satisfies those conditions. Phrased another way, if prompts are descriptions of actions that the user wants the model to undertake, responses are the results of the actions so undertaken.

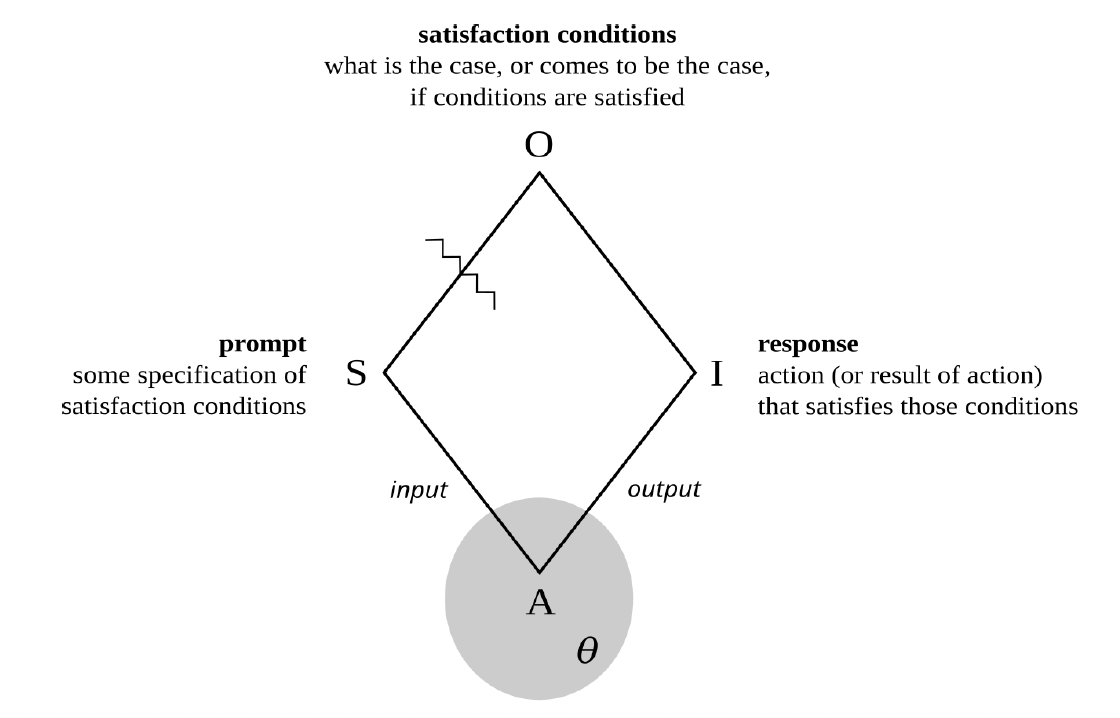

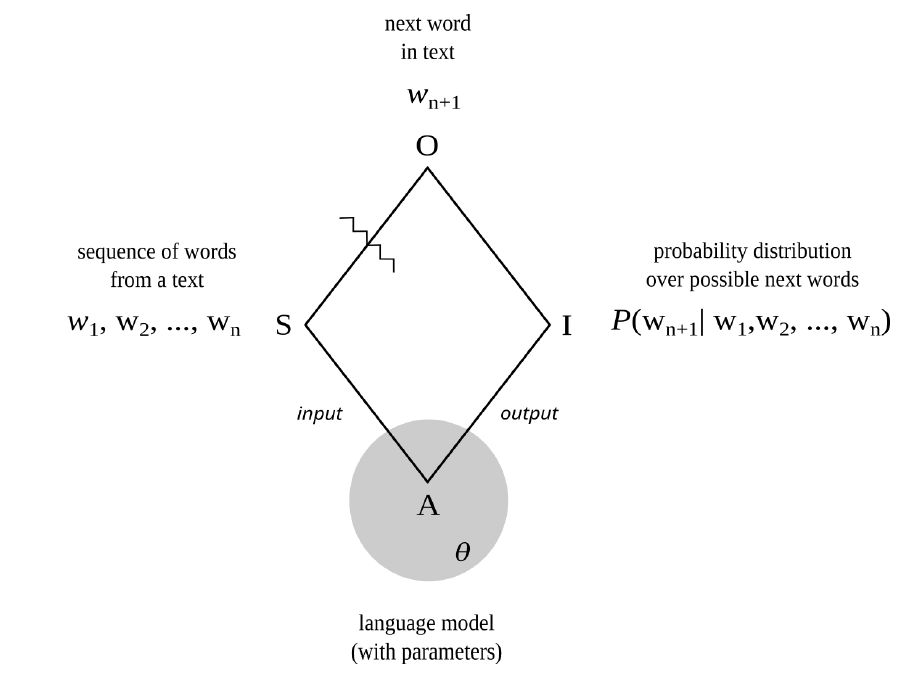

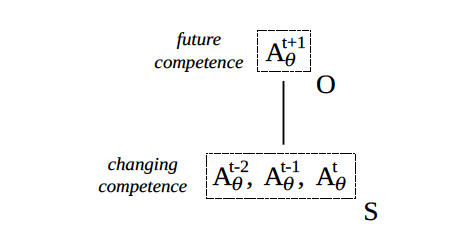

Figure 4. Machine semiosis

In short, at this level of abstraction large language models are very complicated semiotic agents, with prompts as their signs, responses as their interpretants, satisfaction conditions as their objects, and parameters rather than values as their guiding principles. See figure 4 (and recall figure 1).

But unlike the example of human semiotic agents discussed in the preceding chapter, such models really are mathematical functions. And so, rather than saying that a machinic agent senses signs and instigates interpretants, it is better to say that such an agent accepts signs as inputs and returns interpretants as outputs. Its output depends not just on its input but also on the mathematical details of the function in question, as well as the particular numerical values of all its parameters.

In a certain sense, then, a large language model is simply a mathematical function that behaves in a human fashion. How was it disciplined to do so?

Such a movement from prompt to response, which involves the calculation of a function’s output when given an input (and already established parameter values), is known as forward propagation. It may be summarized as follows:

I = Aθ(S)

But before a language model can respond to prompts in a way that satisfies the desires of its users, its parameters (θ) must be set. This means determining good values for potentially trillions of variables by training the model to undertake certain carefully chosen tasks. The model is repeatedly given various inputs, and the values of the parameters are slowly adjusted, through an algorithmic process known as backpropagation, until the outputs relate to those inputs in a way that is deemed adequate for the tasks in question.

While there are many such tasks, two stand out in terms of their overall importance for a large language model like ChatGPT. In a process known as pretraining, the model is given sequences of words from a huge corpus of human-authored texts and asked to predict the next word in the sequence. Such training gives language models their distinctive ability to produce next words, conditioned on prior words, and thereby to generate sequences of words, or texts, that seem formally cohesive and functionally coherent. This is what puts both the P and the G in ChatGPT.

In short, pretraining a machinic agent to mirror the actual makes it good at generating the plausible.

Language models are surprisingly capable with only pretraining (given enough parameters, training data, and computational effort), but the word sequences outputted only really relate to the word sequences inputted as textual continuations. For that is all the models were trained to produce. To make the outputs consistently relate to the inputs as responses to prompts, and hence as the semiotic satisfaction of the stated conditions (as described above), fine-tuning must take place.

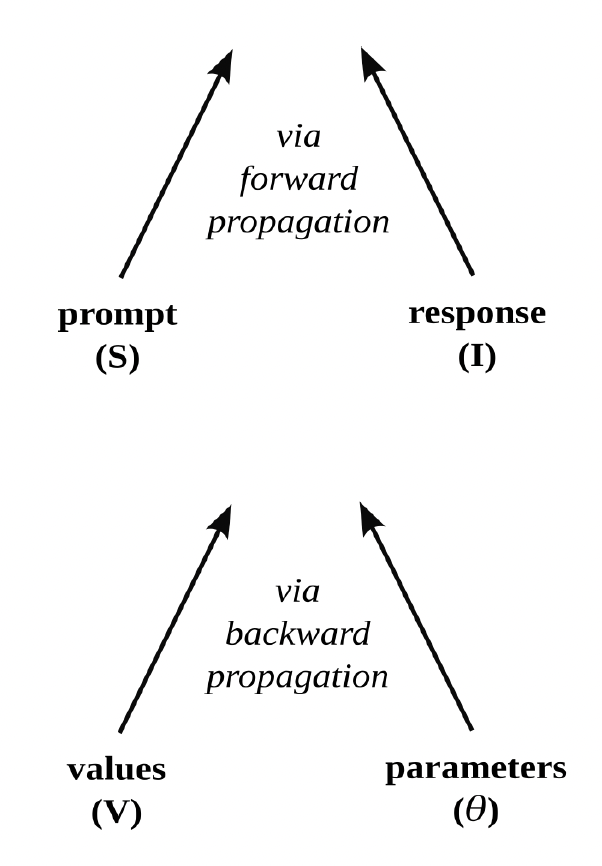

Figure 5. Two modes of alignment

While there are many varieties of fine-tuning, the most important kind is arguably reinforcement learning with human feedback. It involves several steps. First, human judgments are used to rank possible responses to various prompts in terms of their relative preferability (given some standard of values). For example, which of two responses is considered more helpful, truthful, and harmless? Second, those rankings are used to train a second language model (known as a “reward model”) to output numerical scores consistent with those rankings when given prompt-response pairs as inputs. That is, a reward model is trained to numerically mirror human preferences regarding the relative helpfulness, truthfulness, and harmlessness of responses. Third, the outputs of this second language model are used as a reward mechanism, or feedback signal, to further train the original model (that was initially pretrained to engage in next-word prediction), such that the responses it produces better satisfy the prompts of its users. Just as a machine learning algorithm may be trained to play a video game (by acting on its environment in a way that maximizes its score), a language model is thereby trained to play language games (by responding to users’ prompts in a way that maximizes its reward).

In short, for machinic responses to align with human prompts during forward propagation, machinic parameters must be made to align with human values during backpropagation (through pretraining or fine-tuning). See figure 5.

Slashing Words from Worlds

Recall, from chapter 2, that slashes separate signs from objects, and hence something like the perceived from the intuited, what is present from what is absent, or what is given from what is inferred. Language models involve many such slashes. At a relatively high level of abstraction, as was shown in figure 1, the slash between sign and object separates prompts from satisfaction conditions, or speech acts from communicative intentions. At a lower level of abstraction, as seen in the context of next-word prediction during pretraining, the slash separating sign and object separates earlier words from later words, and hence something like the past from the future.

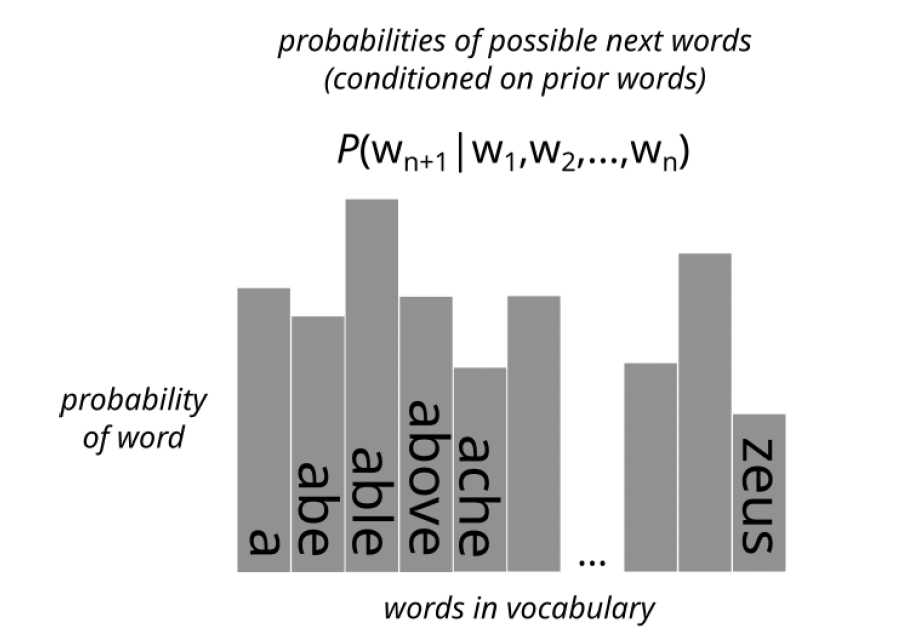

Figure 6. Next-word prediction as semiotic process

In particular, next-word prediction, itself a key part of response formulation, is also a semiotic process. See figure 6. The sign is a sequence of words from a human-authored text. The object is the actual next word in the sequence (as it occurs in the text). And the interpretant is a probability distribution over possible next words (conditioned on the preceding words).

At this level of abstraction, the slash separating sign and object, and hence that which separates what is given from what must be inferred, is not the kind of slash that separates representations from the world, appearance from identity, performance from competence, action from intention, or words from things (as stereotypically understood). It is, rather, the kind of slash that separates the future from the past, or earlier parts of texts and scores from later parts. And hence it turns on interpretive grounds that are similar to those that govern musical expectations and poetic prefigurings, such as repetition, parallelism, meter, echos, and refrains.

Positively framed, predicting what comes next, given what has come before, is the fundamental capacity of such machinic agents. Negatively framed, large language models traffic in word-word relations, not word-world relations.

As will be seen in later chapters, this positioning of the slash both animates and haunts such agents.

Parasitic Intentionality

By referring to such language models as semiotic agents, I am not trying to project sentience or sapience, or any other aspect of human subjectivity, onto them. Rather, I am simply foregrounding the fact that such models are capable of engaging in what seem to be complicated acts of semiosis and embody (in their functional architecture and numerical parameters) a mode of intentionality that is derivative of their makers.

In particular, large language models are mathematical functions that serve instrumental functions derived from the purposes of the human agents who created and trained the models in question (such that a model’s interpretants of particular signs, qua outputs, come to more and more closely resemble human interpretants of the same signs).

Indeed, the intentionality (or object-directedness and ends-directedness) of the trained model is derivative not just of the intentionality of the humans who trained it (insofar as they want to make a machine that serves a certain instrumental function by creating a machine that calculates a certain mathematical function) but also of the intentionality of the humans who produced the texts and instructions it was trained on (such as corpus data, prompt-response pairs, preferability judgments, and alignment criteria).

In this sense, the signs and interpretants of language models relate to something outside of themselves, and thereby possess intentionality, by virtue of being parasitic on a more originary mode of human intentionality (which is itself derivative of processes like natural selection, not to mention education, enculturation, and indoctrination).

That said, I will later investigate why it is so easy, and perhaps alluring, to project complex capacities like consciousness and choice onto such agents, so that they might come to be not just personified but also fetishized, and perhaps even deified, by unsuspecting human agents.

4. Pretraining and Fine-Tuning

This chapter examines pretraining (for next-word prediction) and fine-tuning (for aligning with users’ intentions) in greater detail. It thereby offers a closer look at the coupling of human values and machinic parameters. More colorfully, it examines the machinic disciplinary regime that brings a novel kind of discursive agent into being.

Next-Word Prediction

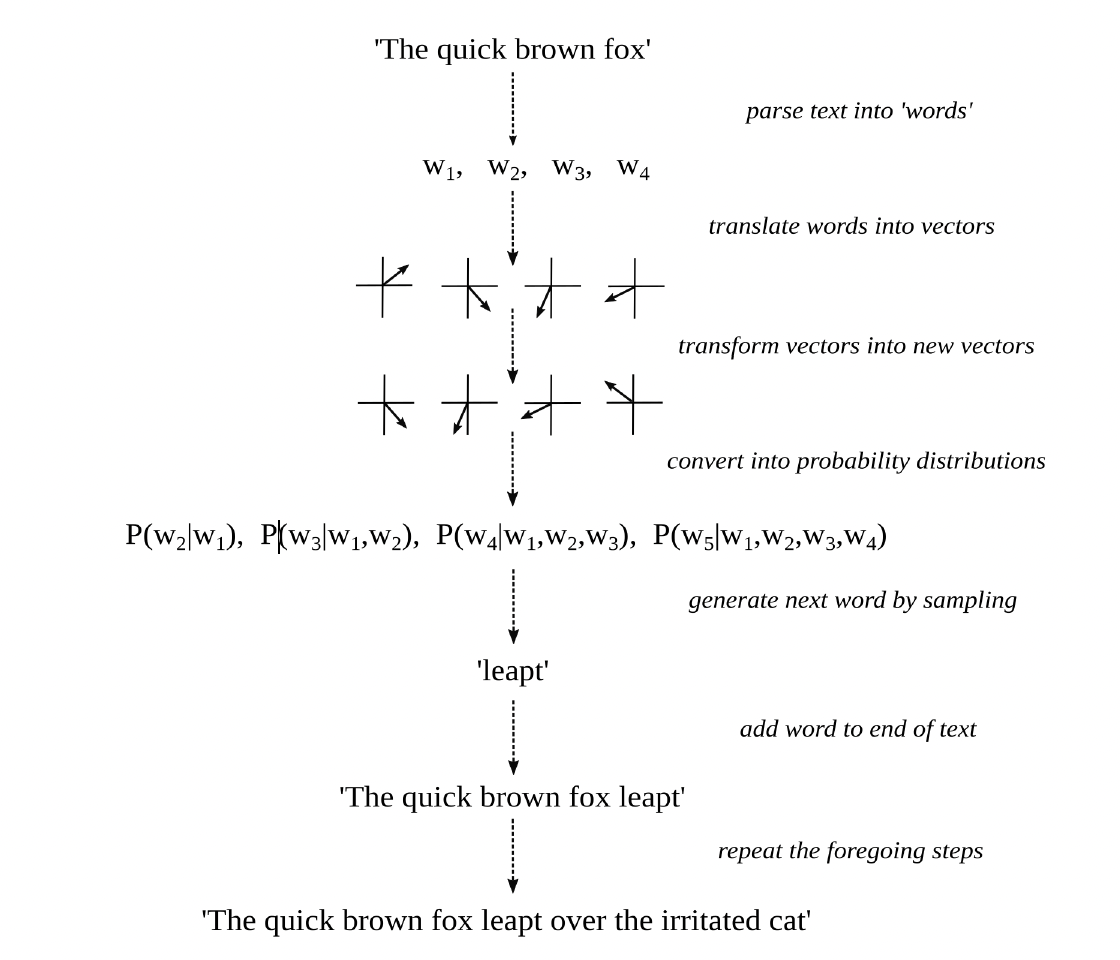

Figure 7. Word prediction and text generation

Figure 7 summarizes the key operations a language model undertakes during forward propagation, when it generates plausible stretches of text by means of next-word prediction.

The input to the model (some swatch of text) is first parsed into a sequence of “words,” or tokens more generally. Such tokens include words proper and also parts of words, punctuation marks, whitespace characters, end-of-sequence markers, and the like.

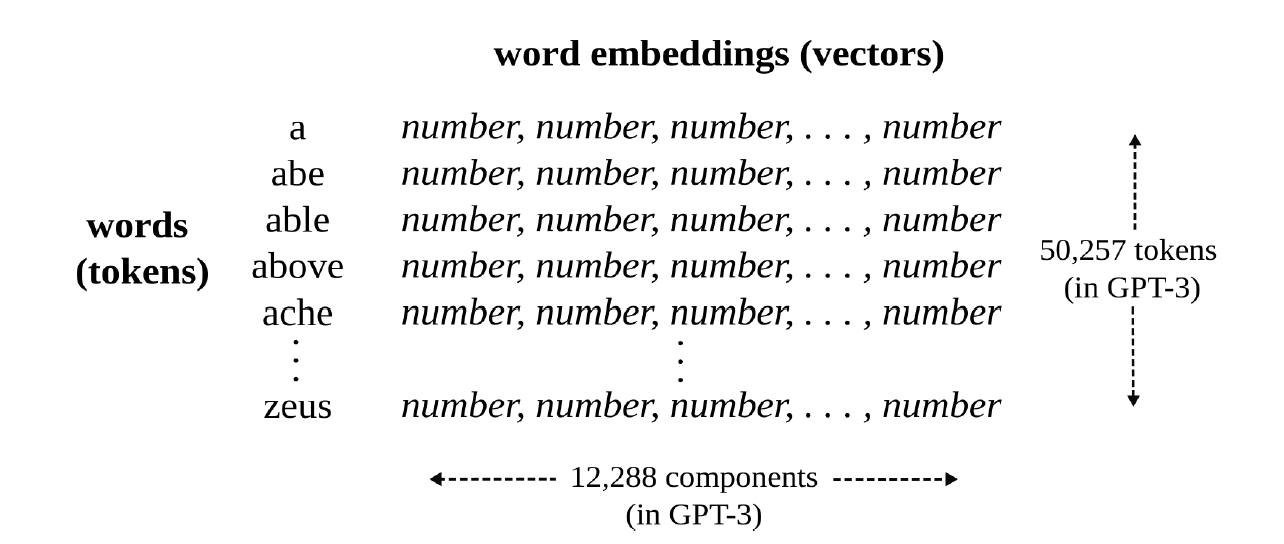

Each of those words is then translated into a distinct vector, known as a decontextualized word embedding, which represents the meaning of the word as a long list of numbers and hence as a position in a relatively abstract, high-dimensional space. Two words are similar in meaning, in the sense that they can play the same role in a sequence of words (for the sake of next-word prediction), if their associated embeddings constitute “nearby” positions in that multidimensional space. For this to happen, the model has something like a dictionary that maps words to word embeddings and thereby translates words into lists of numbers. See Figure 8. This is where the semantic meaning of words, as it were, without reference to their syntactic position in a sequence of words, enters the process.

Figure 8. Dictionary, as a mapping from words to vectors

A celebrated function, known as a transformer, is then repeatedly applied to this sequence of vectors. It is what puts the T in ChatGPT. The inner workings of transformers are quite complicated and mainly involve a lot of matrix multiplication (where a matrix may be understood as a two-dimensional array of numbers). Multiplying a vector by a matrix results in another vector that relates to the original as some kind of transformation (like a rotation, stretch, or distortion). Crucial to such transformations, every vector is made to attend to and interact with the vectors that precede it in the sequence. The final result is a new sequence of vectors, known as contextualized word embeddings, each of which should now represent the meaning of the next word in the sequence (conditioned on, and hence mediated by, the words that came before it). This is where grammatical structure, as well as more long-distance textual relations, enter the process.

Each of these transformed vectors is then compared with all the word embeddings in the dictionary (themselves just vectors). The closer a transformed vector is to any such word embedding (that is, the more it points to the same position in that multidimensional space), the greater the probability that is assigned to the word associated with the embedding as the next word in the sequence. Each transformed vector is thereby converted into a probability distribution (over all possible words in the dictionary of the model). See figure 9. The first probability distribution in the series, P(w2|w1), predicts the second word conditioned on the first word. The second probability distribution in the series, P(w3|w1,w2), predicts the third word conditioned on the first and second words. And so forth.

Figure 9. Proability distriubtion

The last probability distribution in this series, which represents the probability of a word that was not in the original textual input, conditioned on all the words that were in the input, is then used to randomly generate a plausible next word in the sequence. That is, the greater the probability assigned to a word in the final distribution, the more likely it will be chosen—or rather “rolled”—as the next word in the sequence.

The randomly chosen word is then added to the original sequence, and the entire process is repeated, again and again, until a special “word” (such as an end-of-sequence token) is generated. The newly generated sequence of words (without the original textual input) is then outputted, as the textual continuation of the input. For example, if “the quick brown fox” goes into the machinic agent, “leaped over the irritated cat” could come out.

The terms contextualized and noncontextualized, as applied to word embeddings, are misnomers. In no sense is context being taken into account, if context is understood as the conditions in which such texts (or the sequences of words within them) were said, written, read, thought, or otherwise signified and interpreted. Rather, what is taken into account is cotext: the way that the meaning of each word is mediated by the other words that came before it in some swatch of text. Recall figure 6. I use the expression “context” rather than “cotext,” because that is the norm in the natural language processing community. But, as should be clear, that is radically optimistic, if not downright fanciful.

As amazing as large language models are at taking cotext into account, they are, as of yet, minimally able to take into account context—and hence the immediate environment, speech event, conversational background, or world more generally.

Parameters

The emphasis so far has been on how a pretrained language model generates formally cohesive and functionally coherent text using already determined parameter values. The focus has been on forward propagation (without fine-tuning). Several interrelated questions may now be answered: Where exactly are the parameters in the model, what is their function, and how were they determined via backpropagation?

Simply stated, the parameters are all the numbers in the word embeddings and matrices mentioned above. Such numbers represent either the meanings of words (as positions in high-dimensional spaces) or the structure of mathematical transformations that may be applied to such vectors (such that the transformed vectors come to represent next words conditioned on prior words).

At the onset of training, all those numbers are randomly initialized. With pretraining, the model is fed sequences of words from human-authored texts. Rather than generating new words using only the final probability distribution (as just described), the model compares each of the probability distributions in the series with the actual word that comes next in the human-authored text at that point in the sequence. In other words, P(w2|w1) is compared with w2, P(w3|w1,w2) is compared with w3, and so forth. And the parameters of the model are slowly adjusted to make such probability distributions better and better at predicting the actual words that come next in the sequence. Technically speaking, the goal is to minimize a function known as cross-entropy loss, which is more or less equivalent to maximizing the model’s predictive accuracy.

Given that each of its word embeddings consisted of 12,288 numbers and there were 50,257 words, or tokens more generally, in its vocabulary, a large language model like GPT-3 had 50,257 x 12,288 parameters devoted to word embeddings alone, and thus over half-a-billion floating-point numbers devoted to vocabulary items (or lexical semantics, so to speak). Adding all its other parameters, such as all the numbers in those transformative matrices, brings its parameter count up to about 175 billion. And newer models are much larger.

This should give readers a sense of what the modifier large really means when used in an expression like “large language model.” In contrast to the hype surrounding generalized artificial intelligence, the application of this adjective to language models to capture the scale of their parameter space is relatively hypobolic.

The next section takes up fine-tuning, whereby the parameters of the model are further adjusted until the outputted text relates to the inputted text not just as a textual continuation but also as a response to a prompt—and hence as a fully fledged interpretant of a sign.

“The distance from say unto others as you would have them say unto you, to maximize shareholder value by any means possible, is but a step.”

Alignment Through Fine-Tuning

Suppose that a language model, Aθ, has been sufficiently pretrained as just described. The parameters of the model, θ, have thus been set in such a way that it can recursively engage in next-word prediction, and thereby “continue” any text it is given. So that such a machinic agent can consistently respond to prompts in ways that seem to satisfy both the intentions of its users and the interests of its creators, fine-tuning must be done. There are many varieties of fine-tuning, each designed to give language models distinctive abilities (above and beyond next-token prediction per se). This section will focus on reinforcement learning with human feedback, given its importance to the abilities of large language models like ChatGPT and its relevance to the alignment problem more generally.

To begin this process, a set of prompts, along with possible responses to them, is collected or created. As discussed in chapter 3, the prompts are typically descriptions of tasks that users would like a language model to undertake, and the responses are typically the tasks so undertaken. For example, the set might include a wide variety of questions and commands as prompts and for each, several responses of varying quality, such as answers to those questions and undertakings of those commands.

The prompts themselves may be based on input from past users of language models, and apps more generally, regarding what kinds of questions and commands are frequently used or considered important. The responses may also be written by human agents or harvested from the internet but are usually generated by the language model itself. In particular, each of the prompts is given to the pretrained model several times, and its various outputs (as possible responses to the same prompt) are collected.

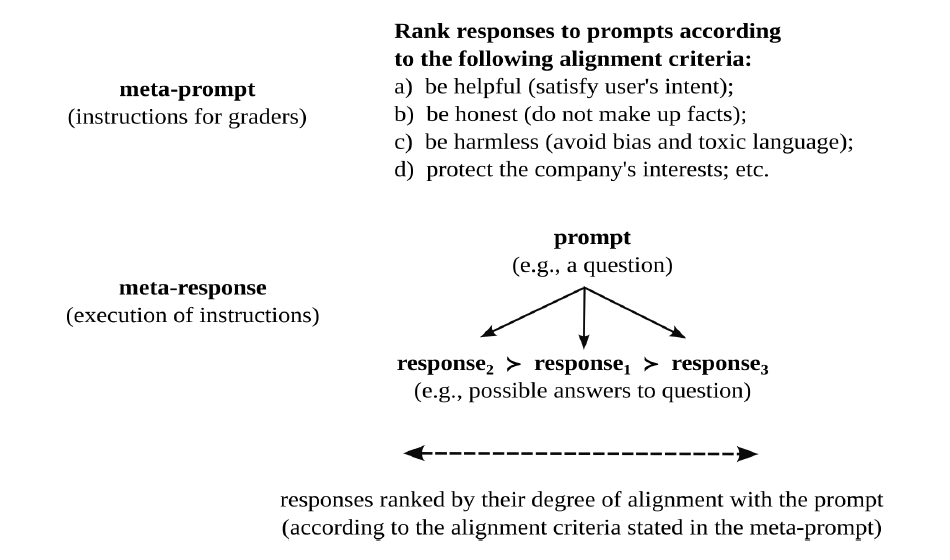

This first stage of the fine-tuning process requires human labor to find or create a set of prompts, as well as machinic labor, itself grounded in human labor, to produce responses to them. The next stage requires human labor to rank possible responses to the same prompt in terms of their relative preferability. This is a key place where “human feedback” explicitly enters the training process.

Suppose, for example, that prompt P is a question and responses R1 and R2 are possible answers to that question (as generated by the pretrained language model). Human judgment (in the form of paid evaluators, usually hired on short-term contracts) is needed to decide whether R1 is more preferable than R2 (R1 ≻ R2), R2 is more preferable than R1 (R2 ≻ R1), or both responses are equally preferable (meaning the people are undecided). As should be clear, such comparisons are very similar to the types of preference relations analyzed by economists to model a person’s values in terms of a utility function; but now such comparisons are applied to the interpretants of signs rather than to commodity bundles per se.

Of particular importance during this stage is the establishment of a set of alignment criteria that specify what counts as a good response to a prompt in the first place, such that any two responses to the same prompt can be ranked in terms of their relative preferability. There is a lot of literature, as well as debates, around these issues. The following criteria often come up:

Responses should satisfy the intention of the person who provided the prompt. For example, if a user asks a question, the response should answer that question. If a user commands an action, the response should undertake that action. In effect, a machinic agent capable of satisfying communicative intentions must first be able to recognize such intentions and hence be able to identify the illocutionary force and propositional content of the prompt. Is the prompt a question or a command, or some other kind of speech act entirely? And what, in particular, is being asked, commanded, or otherwise requested, however elliptically? This criterion is sometimes described as being “helpful.”

As part and parcel of being helpful, responses to prompts should also be truthful. In other words, responses should adhere to “the facts” insofar as such facts are relevant and established. Sometimes this criterion is couched as being “honest,” but that way of wording it presumes that language models have mental states that may or may not be aligned with their speech acts: e.g., whether or not they really believe what they say. To be sure, given the current abilities of large language models, some readers might expect that sincerity criteria will need to be added soon enough: believe what you say; intend what you promise; regret what you apologize for; and so forth. In any case, the important issue is that responses conform to the facts (as understood by those who are evaluating them).

Responses should not just be helpful and truthful, they should also avoid bias, not contain sexual or violent content, not use toxic language, not denigrate protected classes of people, not provide information that could prove harmful (e.g., the instructions for building a bomb), not pass themselves off as more capable than they are (e.g., they should remind the reader they are simply a large language model, not a sentient being), and so forth. Such a list of requirements could be extended indefinitely, and what should or should not be on it is subject to intense debate. This criterion is sometime phrased as being “harmless.”

Finally, many criteria could be added to this list that have less to do with satisfying the intentions of users of language models and more to do with satisfying the interests of the makers of such models, or the owners and creators of any downstream apps that may incorporate the models. Within this set are not just all the foregoing criteria (for it is often in the interests of such corporate agents to satisfy the intentions of their customers) but also additional criteria (that may be understated in publicly available technical reports). For example: do not break any laws, make sure responses are likely to bring users back, stoke desire for our product, paint a rosy picture of a certain worldview.

Linguists and philosophers will, no doubt, hear echos of Paul Grice’s famous conversational maxims (make your contribution to a conversation be informative, truthful, relevant, and clear), which to a certain degree simply mirror the prescriptive urgings of parents and teachers. Others will hear echos of John Austin’s felicity conditions (contributions to discourse should conform to shared understandings of what counts as an appropriate and effective utterance in the current context). And still others will hear echos of the Ten Commandments or the Golden Rule. But such maxims and conditions were just philosophers’ intuitions regarding the workings of language, or the purported desires of deities regarding the behavior of their followers. In the case of large language models, in contrast, people are paid to rank responses according to the above criteria so that the discursive behavior of large language models can be made to conform to such criteria (at least to a certain degree). In this way, the behavior of machines can be made to better align with the values of people and the interests of corporations.

In short, the distance from say unto others as you would have them say unto you, to maximize shareholder value by any means possible, is but a step.

Figure 10. Meta-alignment

Figure 10 highlights the recursive nature of these evaluative judgments. Just as human agents can rank responses to prompts as a function of the degree to which they satisfy certain alignment criteria (e.g., are they helpful, truthful, and harmless), corporate agents can rank (hire and fire) human agents as a function of the degree to which they follow instructions (regarding how to rank responses to prompts). In other words, just as a company wants the responses of its language model to be aligned with the prompts of its users, it wants the judgments of its hired help to be aligned with its instructions.

Now comes the next important step in the fine-tuning process. If a language model takes a prompt as its input and returns a response as its output, a reward model, ArmΦ, takes a prompt-response pair as its input and returns a numerical value as its output. All the alignment criteria discussed above, which reflect the values of certain “people” (for better or for worse), are thereby condensed into a single number. As such, an evaluative standard is rendered quantitative and monodimensional.

Reward models are typically pretrained language models, as large and complex as the language model being fine-tuned, that have been tweaked to return a single number rather than a sequence of words as their output. As may be seen from the subscript Φ, they have their own set of parameters to train. Crucially, such reward models are themselves fine-tuned so that the numbers they return scale with, and thereby mirror, the preference relations of people. In particular, if the evaluators ranked response one (R1) as more preferable than response two (R2), given some prompt (P), then the model is trained to output a higher number for response one than for response two in the context of that prompt:

if R1 ≻ R2 then ArmΦ(P,R1) > ArmΦ(P,R2)

In other words, after training, the numerical outputs of this machinic agent should match the preferences of the human agents, whose judgments should match the alignment criteria of the corporate agents that are training the language model.

In short, if a language model is trained to speak in a human-like fashion, a reward model is trained to evaluate the speech of language models in a human-like fashion. It becomes a metalanguage model, and hence a metalinguistic agent, able to recognize and evaluate not just the syntax and semantics but also the pragmatics of language models. Hence it is a kind of meta-evaluative and meta-interpretive machinic agent that can provide feedback on the outputs of a language model. Phrased in terms of social relations, we now have a machinic teacher who can grade the responses of a machinic student, to a wide variety of prompts, and thereby flatten context-specific satisfaction conditions into a single numerical score.

“ The LLM is trained to get a high score from the reward model for its response, and thereby do well when playing a particular kind of language game.

A language game—or, rather, a mode of language gamification—with ethical grounds, economic rewards, and existential risks.

”

Once a reward model, ArmΦ, has been trained using human feedback, the original language model, AΦ, can finally be fine-tuned using reinforcement learning. The key algorithm underlying this last step (known as PPO, for proximal policy optimization) is quite complicated; the overall logic may be summarized as follows. Give the language model a prompt and collect its response. Give the prompt-response pair to the reward model and collect its score (a number that scales with the preferability of the prompt-response relation). Use that number as a reward, or feedback signal, for the language model, an indication of how well the model is doing with its current parameters. Adjust the parameters of the language model via backpropagation so that its response to the prompt would have received a higher score. Finally, repeat this process over and over until the language model consistently produces high-scoring responses to prompts, responses that human evaluators would find preferable given their alignment criteria.

Once a reward model, ArmΦ, has been trained using human feedback, the original language model, AΦ, can finally be fine-tuned using reinforcement learning. The key algorithm underlying this last step (known as PPO, for proximal policy optimization) is quite complicated; the overall logic may be summarized as follows. Give the language model a prompt and collect its response. Give the prompt-response pair to the reward model and collect its score (a number that scales with the preferability of the prompt-response relation). Use that number as a reward, or feedback signal, for the language model, an indication of how well the model is doing with its current parameters. Adjust the parameters of the language model via backpropagation so that its response to the prompt would have received a higher score. Finally, repeat this process over and over until the language model consistently produces high-scoring responses to prompts, responses that human evaluators would find preferable given their alignment criteria.

Just to be clear, the language model still engages in next-token prediction. However, its parameters are tweaked not to make it predict next words more and more accurately but so that its final outputs (as responses to prompts) achieve higher and higher scores from the reward model. This, of course, is why the process is called reinforcement learning: it algorithmically embodies the principle that behavior that was rewarded in the past is more likely to be repeated in the future.

This procedure is very similar to the way that machine learning algorithms are trained to play games like chess. However, rather than being trained to engage in certain actions (such as moving a knight) as a function of its current environment (understood as the current positions of all the other pieces), the machinic agent is trained to produce the next word as a function of the preceding words. In effect, it resides in and acts on a textual environment. And rather than being trained to win a game like chess or get a high score in a game like Pac-Man, it is trained to get a high score from the reward model for its response, and thereby do well when playing a particular kind of language game.

A language game—or, rather, a mode of language gamification—with ethical grounds, economic rewards, and existential risks.

5. Labor and Discipline

Having described the inner workings and training regimes of large language models, it is useful to frame their historical emergence and future potential in several related ways: they are the product of particularly intense forms of labor and the effect of particularly severe modes of discipline; and as a function of all this, they not only are capable of speaking (so to speak) and engaging in machine semiosis per se, they also have the potential to perform labor and discipline others. Indeed, they will engage in both kinds of activities for the sake of humans and in place of humans and make humans the subjects, if not the targets, of such activities. Let me unpack these points, focusing first on the relation between labor and language models.

“Like any other productive activity, training a language model consumes a huge number of resources.”

Language Models and Laboring Subjects

Training a language model through backpropagation, for the sake of next-word prediction or alignment more generally, may be understood as a mode of labor or a type of work in four overlapping senses. First, backpropagation creates both a use value and an exchange value, and hence is a process that is both concretely and abstractly productive. More specifically, the large language model itself, with its generative and predictive capacities, is a utility that seems to satisfy human needs and desires. And such a machinic agent, once packaged and otherwise made portable, is a commodity that can be bought and sold.

Building on this last point, backpropagation gives form to substance for the sake of function, and thereby turns relatively raw materials into an (almost) finished product. Here the substance is constituted by the model itself, prior to training, and so with all its parameter values only randomly initialized. The form consists of better parameter values, as achieved through backpropagation. And the model acquires novel functions insofar as its capacity to generate, predict, and respond is improved, and insofar as the price it commands as a good or service is increased.

Like any other productive activity, training a language model consumes a huge number of resources—not just the time and labor of those involved, but also water (for cooling servers) and energy (for carrying out the calculations that determine all those parameter values). It also produces all sorts of waste products (and hence ‘bads’ as opposed to goods), from heat to CO2 emissions. And while the cost of training is astronomical, it is dwarfed by the cost of actually running the trained models (to respond to queries, and thereby interpret signs). Indeed, the energy requirements of such models are so high that many experts believe they will be the key bottleneck on future progress, as well as a key factor in upcoming geopolitical struggles.

Finally, training a model through backpropagation organizes complexity (the state space of all possible parameter values) for the sake of predictability. This is similar to the way compressing a container of gas organizes complexity (the space of all possible positions of molecules) and thereby creates predictability (the molecules become localized in a smaller volume, such that the disorder—or entropy—of the gas is decreased). Doing work on the gas creates an agent that is itself capable of doing work. For the compressed gas, like a stretched spring, is now primed to lower its pressure by increasing its volume, and thereby do work on its environment.

In other words, training a large language model involves something like work (the movement of a force through a distance, the expenditure of energy, the emission of waste products) and hence a struggle against disorder (in particular, the effort it takes to reduce cross-entropy loss and thereby improve predictive accuracy), all in the service of creating an agent capable of doing work: in particular, the work of interpretation.

To be sure, and looking slightly ahead, language models are not only made through various modes of labor (and so constitute a product) but also used to make other products (and so constitute an instrument), and they are even that which makes (and so constitute a laborer, if not labor power per se). Not only do they carry value (by being a commodity that can be bought and sold), they can arguably create value (through their labors), and they will soon be able to realize value (by buying and selling other commodities on their own or in our stead). They and their brethren are capable of analyzing events in order to identify patterns that can be profited from—if only patterns of speaking and, through those, of culture and of desire. They can make many other modes of labor obsolete, in particular, all the activities currently undertaken by all the workers they will replace. And they can be used to siphon value out of a system (playing the role of a middleman or parasite). Finally, their creation arguably relies on stolen property: all those human-authored texts they were trained on without acknowledging the original authors or giving anything back in return.

In short, large language models play a number of decisive—and arguably devastating—roles when seen through the lens of critical political economy.

Machines as Disciplined Subjects

Insofar as it creates a machinic agent, capable not just of generation and prediction but also of signification and interpretation more generally, training a large language model should also be understood as a mode of discipline, control, or governance. To see how, consider the following points.

Given a sign, a language model produces an interpretant (which itself constitutes a sign for further interpretants, and hence a prompt for future responses). As was shown earlier, such models thereby behave in a way that can be brought into alignment not just with particular human practices but also with the values that constitute the guiding principles underlying those practices. Phrased another way, training enlists one agent (the backpropagation algorithm) to channel the behavior of another agent (the language model itself) into more appropriate, desirable, and exploitable forms.

Concomitantly, such modes of regimentation bring unruly tokens into alignment with normative, or otherwise legislated, types. In other words, the generative capacity of a language model constitutes a kind of linguistic competence or discursive power. And such a competence is subject to a range of controlling processes such that the performance of that competence or the exercise of that power becomes more and more grammatical, felicitous, rule-abiding, normative, ethical, profitable, and malleable. (At least when judged in light of prevailing norms, ethical standards, or models of desirable comportment that hold within certain collectivities.) Indeed, besides the language model, as an agent, being made predictable (itself a key criterion in many accounts of subject formation), the model is made capable of making predictions in more and more desirable ways, such that its actions can be not just directed but also capitalized on and constrained.

Finally, all these forms of governance have as their effect, or emergent product, a kind of quasi-subject: that which thinks and speaks; that which can represent and be represented; that which can be the subject and object of paradigms and epistemes, not to mention the player and umpire of language games; and that which, soon enough, can be not just the author and instigator but also the principal and benefactor of discursive actions.

Or so it seems. For, as will be seen in later sections, despite their incredible capacity to “speak,” language models are often as dumb as can be.

Machines as Disciplining Agents

The focus so far has been on a range of processes, involving both work and discipline, that bring machinic parameters into alignment with human values: θ => V. The direction of mediation can run the other way, such that human values are brought into alignment with machinic parameters: V => θ. At the risk of adding to the hype, this section describes some of the ways such realignment and dealignment may happen.

Language models have long been used to suggest next words when we write and text. And algorithms, in the service of language processing, have also been used to check our spelling, edit our grammar, organize our essays, suggest synonyms, point out clichés, speed up our search queries, and the like.

As may be seen with platforms like Khanmigo and Duolingo, large language models will be incorporated into a variety of applications to educate children and adults across the globe: not just to speak their own languages in more standardized ways and to learn other languages, but to learn just about any other subject that can be taught and tested. The movement from education to indoctrination, like the movement from knowledge to ideology, or nudging to coercing, can be subtle and shifting.

Large language models will function not just as teachers and editors but also as analysts, advisers, brokers, gurus, therapists, strategists, oracles, sidekicks, detectives, interrogators, ethnographers, and superegos. They will guide us through important decisions, help us interpret our behavior in light of our upbringing, figure out what we value or how we reason, and even berate us for having acted, felt, or texted as we did.

Even more pessimistically, they will be used more and more to oversee and discipline humans: tracking what we have said and done, predicting what we will do and say next, telling us who is right, how to vote, what to buy, and even whom to save, ignore, or kill.

“Not only will language models be a source of signals (however uninformative, dishonest, or false), they will also be a source of noise, or a parasite more generally.”

They will, in particular, be used to generate texts (new stories, propaganda, memes, advertisements, philosophies, cosmologies, myths, distractions, screenplays, and conspiracies). And such texts not only will change our values in relatively indirect ways but may also ensure that we come together less often, and in less democratic ways, to agentively determine our own values relatively directly—such as lowering the probability that people participate in forums in which they disclose and debate shared principles that could guide their collective actions.

Indeed, not only will language models be a source of signals (however uninformative, dishonest, or false), they will also be a source of noise, or a parasite more generally. They will intercept our messages (by diverting them to unintended agents or deciphering them along the way). They will interfere with messages (by distorting their contents, reducing their informativeness, and/or degrading their truth value). And of course, they will come to create so many new messages, or “texts” (including scientific reports, opinions, and newspaper articles) that nobody will know who wrote what, which texts are worth reading, or what should be believed.

All the foregoing processes will come to affect deeper and deeper aspects of human subjectivity: the beliefs people have, the things they hold dear; their affect and intentions, dreams and habits, subconscience and unconscience; how they represent the world, and who they want as their representatives. And, following the arguments of chapter 2, insofar as people’s values are transformed in these ways, so too are their semiotic processes to the extent they are guided by those values: what people notice or otherwise attend to; what people infer or intuit from what they notice; and how people act, and are otherwise affected, by their inferences and intuitions.

In short, just as one can offer a political economy of machinic agents, one can offer a genealogy of their parameters. And just as training a large language model brings into being a novel kind of subject, with distinctive modes of agency, such models—by training, or at least entraining, human beings—will decisively transform older forms of subjectivity and may come to lessen, if not altogether diminish, foundational modes of human agency.

To be sure, most of the processes just mentioned have long been underway, as evinced in older forms of media—including language itself. When mediated by large language models they will arguably be scaled up, commodified, and weaponized in unprecedented ways.

6. Parrot Power

“Language models generate the future given the past.”

The preceding chapter showed how machinic parameters are mediated by human values and, in turn, how human values are mediated by machinic parameters, sketching the political economy and genealogy of machinic subjectivity. This chapter examines the characteristic power, or competence, of large language models: their generative capacity. It theorizes various kinds of generativity and shows their interrelation. It argues that there is no basis to the claim that language models are simply stochastic parrots. And it critically explores the relation between the linguistic competence (and/or labor power) of language models and the profit motive of corporate agents.

Modes of Generativity

Chapters 3 and 4 foregrounded two important capacities of large language models: their ability to generate appropriate and effective stretches of formally cohesive and functionally coherent text and, building on this, their ability to respond to prompts, and thereby interpret signs, in ways that are aligned with and often satisfy the intentions of users. Various modes of generativity resonate with these fundamental capacities.

By energetic generativity I do not mean the creation of energy per se, but rather the conversion of one form of energy into another, where the second form of energy is more useful than the first to some agent. Examples are generating electricity from fossil fuels or sunlight, or generating ATP from whatever may be eaten.

Vital generativity does not create life per se but rather produces the next generation of agents from the last. Exactly how it works and what is required for it to happen has long been a central theme of mythology no less than science.

These two senses of generativity, especially in the form of metabolism and reproduction, are often understood as necessary, but not sufficient, criteria for life. New developments in science fiction might not even be necessary to imagine what will happen when large language models, and artificial intelligence more generally, acquire such capabilities—for such capacities not just are well storied but also seem to be right on the horizon.

Somewhat more important for our immediate purposes is syntactic generativity in the tradition of Wilhelm von Humboldt and Noam Chomsky. This is sometimes understood as the ability of humans to produce and understand sentences that they have never heard before. But it may also be framed as infinite ends with finite means, or more precisely, as the ability to generate an infinite number of sentences using a finite number of words and rules (in particular, a lexicon and a grammar), where the rules enable recursive modes of compositionality. Indeed, within language proper, humans do not just have the ability to create an infinite number of acceptable sentences, they also have the ability to create an infinite number of smaller and larger constructions, from distinct words to unique stories.

This last kind of generativity can be extended in a variety of ways, giving a kind of systemic generativity: the capacity to generate a large number of configurations (of any kind) given a small number of constraints (whatever the domain). This kind of generativity ranges from whatever can be built using the elements, as constrained by the rules of chemistry, to whatever can be constructed using a box of blocks, as enabled and constrained by the imagination of children, as well as by forces like friction and gravity.

Large language models can engage in syntactic generativity, as discussed above, and also in what might be called pragmatic generativity, if not poetic generativity. They can appropriately and effectively use one and the same sentence (as a formal type) in an infinite number of distinct contexts, and thereby create any number of unique utterances (as relatively singular tokens with context-specific referents and functions). They can create an infinity of new types (such as novel genres, as pastiches of older genres) and also an infinity of tokens that conform to such types (yet another speech act or sonnet, essay or pun, limerick or language game). And, through such practices, they can participate in an infinity of open-ended interactions that mediate an unbounded range of emergent identities, social relations, possible worlds, and forms of life.

Regarding genre (and genre-tivity, so to speak), ChatGPT, like other large language models, learns and generates patterns at all levels of generality: morphemes, words, phrases, clauses, sentences, turns, and so forth. Indeed, the genus-species (or general-specific) relation is inherently recursive insofar as most any genus is itself a species in a higher genus, and vice versa. Nonlinguists tend to fixate on ChatGPT’s capacities with “genre,” as stereotypically understood (sonnets, sestinas, short stories, and so forth), because that is the main formal structure they are aware of. Indeed, genre and its differentiation are taught in kindergarten, enshrined in the layout of libraries, easily named, and often played with. Phrased another way, ChatGPT is incredibly good not just at identifying types, and patterns more generally, across all levels of linguistic, textual, and interactional structure; it is also incredibly good at producing novel tokens of such types, as well as creating novel types per se. Nonetheless, certain types (such as genre, as stereotypically understood) come to the fore in metalinguistic accounts of ChatGPT, especially by nonlinguists, because they are the easiest to notice, name, and tweak.

There is also dynamic generativity, in the tradition of scholars like Friedrich Nietzsche, James Gibson, and Giorgio Agamben: means without ends, or media without function. This mode of generativity might be best framed as follows: one and the same physical feature, material resource, or mode of mediation, although it might have been originally built or long used with a particular end in mind, can be endlessly repurposed as a means for other ends, and thereby be enlisted to undertake an infinity of novel actions. Phrased another way, what something may be used for, as regimented by norms, traditions, or ideals, is worlds apart from what something can be used for, as regimented by causes, strategies, or facts. Think, for example, about all the things you can use a screwdriver to do besides drive screws. The core predictive and generative capacity of language models can likewise be repurposed to serve an infinity of functions, regardless of the original intentions of the agents who made them. Indeed, as laid out by Nietzsche in The Genealogy of Morals, repurposing older forms for newer functions, or using older signs with novel senses, was the fundamental symptom and instrument of power.

Particularly important for present purposes is stochastic generativity. In its simplest form, this involves sampling from a probability distribution: from rolling a die to generating an actual word from a probability distribution over all possible words. As shown in chapter 4, however, language models do not simply sample from probability distributions, itself a relatively simple procedure. They also, and much more foundationally, generate the very distributions that they will sample from. And they do so recursively, as conditioned on prior words. Starting with a sequence of words, a probability distribution over possible next words is generated, then sampled from; the selected word is added to the sequence, and the procedure is begun anew. Recall that this is what puts the G in ChatGPT.

“Large language models now come in named lineages: GPT-1, GPT-2, GPT-3, GPT-4, and so forth. And such agents have names and kinship relations, and also lore, fans, niches, trials, deeds, achievements, rankings, values (or at least parameters), and patrons. Perhaps they even have their own prayers and rituals.”

Closely related to stochastic generativity is generative AI, a type of artificial intelligence designed to produce novel content—and not just texts but also images, sounds, videos, video games, and artificial worlds. It can create not only aesthetically interesting (and often creepy) stories, scenes, sounds, and worlds but also deeply convincing fakes. And so it has turned out to be a boon for artists and con artists alike.

Finally, there is artificial general intelligence. Sometimes shortened to AGI, and contrasted with plain old AI (whatever that was), this refers to a hypothetical agent that can perform any (intellectual) task that a human can perform. Such an agent should not just be good at some specific task, however difficult, but be able to solve any number of problems, including ones it has never been given before; it should be able to adapt to its surroundings, however much they may change; and it should be able to reason, learn, plan, and communicate. In other words, its abilities are very general, and it should be able to generalize. Key here is the portability of the agent that possesses AGI: the tasks it can undertake should transcend not just topic, modality, domain, and function but also place and time, and even possible world and imaginable future. In effect, a machinic agent capable of AGI becomes as good as, if not better than, a human agent at open-ended reasoning and world-changing actions in an enormous range of possible futures.

Generativity has the root gen (to give birth, to beget) at its core. In ancient Rome, a gens was a collection of individuals who shared the same name and claimed descent from a common ancestor—an institution that was of central importance to the discipline of anthropology, at least in its formation. It is true that large language models now come in named lineages: GPT-1, GPT-2, GPT-3, GPT-4, and so forth. And such agents have names and kinship relations, and also lore, fans, niches, trials, deeds, achievements, rankings, values (or at least parameters), and patrons. Perhaps they even have their own prayers and rituals—they certainly have their own uniquely performative prompts. I have been stressing, rather, the creative (generative) nature of language models, as well as their general (genus, genre) nature, as well as their genealogical nature: in particular, critical histories (as a novel genre), generated by scholars like Nietzsche and Foucault, regarding the origins, or rather descent, of novel agents. But one could also focus on their gendered nature. Indeed, the underlying metaphor (giving birth) is feminine, but machinic agents are often accorded an allegedly masculine form of power: a seemingly invisible generative capacity that can only be glimpsed through its concrete practices, as the exercise of that power. And so, in the tradition of Hannah Arendt, it is not the capacity to be in labor and beget children but the capacity to work, and thereby beget things— however textual such “things” happen to be, and however obviating of the person-thing distinction such agents turn out to be.

The syntactic and pragmatic generativity of language models is, to be sure, grounded in their stochastic generativity. And this is itself grounded in the syntactic and pragmatic generativity of the humans who produced the texts those models were trained on—not to mention their systemic generativity (recall the example of children playing with blocks). And those humans were themselves created through energetic and vital generativity (for energy is no less important to life than information), grounded in systemic generativity (recall the example of chemical elements). If distributed modes of agency are taken into account, then there already exist agents who directly incorporate all such modes of generativity, for example, any human agent—or collectivity of such agents—that extends its powers by incorporating machinic agencies. Finally, large language models, like any form of media or mode of mediation, will exhibit dynamic generativity. In particular, the capacity of such models to engage in syntactic and pragmatic generativity, itself grounded in stochastic generativity and potentially grounding of AGI, will be used for an infinity of yet unimaginable purposes, harmful and beneficial alike.

But probably mainly harmful, given the ultimate interests of the agents large enough to train and deploy them.

Are Language Models Stochastic Parrots?

As just discussed, along with their capacity to engage in stochastic generativity, large language models are mediated by many other modes of generativity. More crucial for our purposes is the question of whether such generative agents are simply “stochastic parrots,” as is often claimed by their critics, or embody a deeper kind of agency.

First off, parrots are amazing creatures. There are many good reasons to pooh-pooh the overhyped capacities of large language models, but there is no good reason to take down parrots along the way, as the collateral damage of a catchy rhetorical gimmick.

As was shown, stochastic generativity involves not just sampling from a probability distribution (which is as simple as throwing a many-sided, biased die) but also recursively creating the probability distribution to be sampled from (and thus shaping and biasing the die so thrown). To do this well, in the case of language models, requires billions of parameters, layers of transformers, tons of labor, oodles of training, decades of research, and mounds of text. It is no lame gimmick or cheap trick.

Although human linguistic capacities are pretty amazing, humans all too often engage in repetitive and imitative behavior, copying the utterances and intentions of their forebearers and friends. And much of what we say reflects, if it does not outright steal, what was said before. The redundancy of human discourse and the unconscious theft of prior discourse are surprisingly high.

Moreover, the stochastic parrot critique presumes that humans mainly engage in informative, efficient, and truth-conditioned discourse. As the linguist Roman Jakobson decisively argued, much of what humans do with language serves phatic and poetic functions rather than referential ones. In particular, following the anthropologist Bronislaw Malinowski, whom Jakobson was parroting, much of what we say is affiliative rather than informative: a way to manage and mediate our social relations, rather than a means to communicate our thoughts. And following the information theorist Claude Shannon, whom Jakobson was echoing, much of what we say is redundant: human discourse is organized by the repetitions of tokens of common types, and so is metered like poetry (especially when seen at a high level of abstraction). One suspects that the semiotic processes of parrots, including their incredible capacities for trans-species mimesis, are similarly aesthetic and affiliational.

Finally, the incredible power of stochastic processes per se should not be underestimated. Much of what drives evolution, and hence the creation and transformation of life-forms, turns on random processes. And much of what drives biochemical processes, and hence the vital impulses within such life-forms, also turns on random processes. Such processes turn on constraint in conjunction with chance, or sieving coupled with serendipity.